The Complete Guide to Agentic AI in 2026: From Chatbots to AI Coworkers

A plain-English introduction to what AI agents are, how they work, and why everyone is suddenly talking about them.

A few years ago, the main question was simple: “What can ChatGPT answer?”

Then the question became: “What can AI help me write, summarize, code, or analyze?”

Now, in 2026, the question is shifting again:

What can AI actually do for me?

That shift is why everyone is talking about agentic AI.

The term sounds technical, maybe even a little overhyped. But the basic idea is simple:

A chatbot answers. An AI agent acts.

A chatbot waits for your prompt. An AI agent can take a goal, break it into steps, use tools, check what happened, and continue working toward the result.

That does not mean AI agents are magic. It does not mean they are fully autonomous employees. And it definitely does not mean you should let them do anything without supervision.

But it does mean we are moving from AI that only talks to AI that can participate in workflows.

That is the real story.

1. What is agentic AI?

Agentic AI refers to AI systems that can pursue a goal with some degree of autonomy.

Instead of only generating an answer, they can:

- understand a task,

- make a plan,

- use tools,

- observe results,

- adjust the next step,

- and sometimes ask a human for approval.

A normal chatbot might answer:

“Here is how you can write a customer complaint summary.”

An AI agent could do something closer to:

“I found the latest customer complaints, grouped them by topic, detected the most urgent issues, drafted a summary, and highlighted the cases that need human review.”

The difference is not just intelligence. The difference is action.

A chatbot gives you a response.

An agent moves through a task.

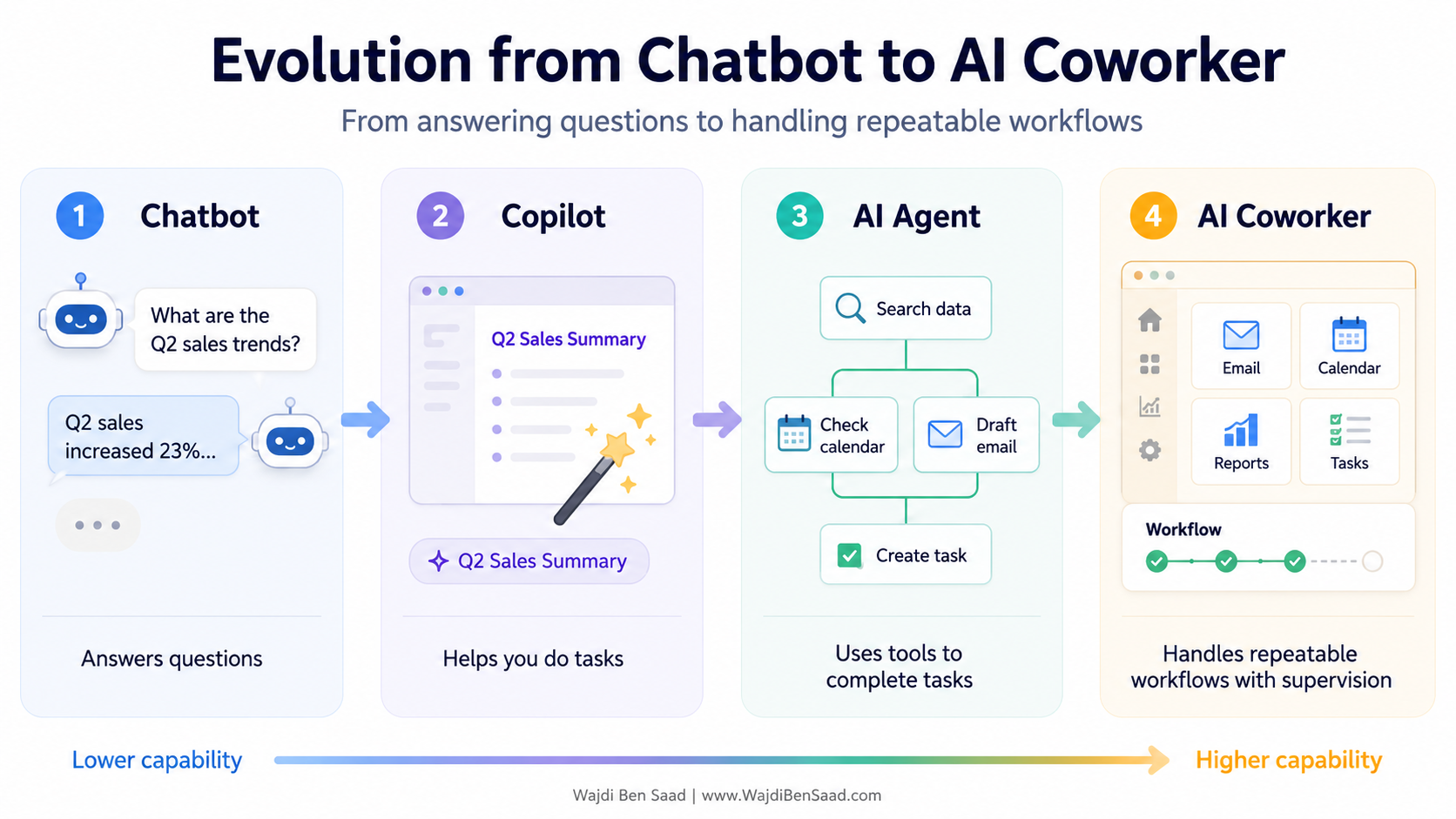

2. Chatbot, copilot, agent, AI coworker: what is the difference?

A lot of confusion comes from the fact that people use these words interchangeably. They are related, but they are not the same.

The most important distinction is this:

Chatbots are conversational. Agents are operational.

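A chatbot is useful when you need an explanation, a draft, or an idea.

A copilot works alongside you inside a tool you already use, such as a code editor or a document, suggesting the next step while you stay in control.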

An agent is useful when the task has several steps and requires tools: for example, searching the web, reading documents, querying a database, creating a calendar event, writing code, or sending a draft for approval.

An AI coworker is the more ambitious version. It is not just a one-time assistant. It is an agentic system that can handle a recurring workflow, with rules, memory, supervision, and clear boundaries.

The word “coworker” is useful, but we should be careful with it. AI agents are not people. They do not understand responsibility. They do not have judgment in the human sense. They are software systems that can perform parts of work.

A better mental model is:

An AI coworker is a workflow engine with language, reasoning, tools, memory, and supervision.

3. How does an AI agent work?

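The simplest way to understand an AI agent is to think of a loop: the agent receives a goal, plans a step, uses a tool, observes the result, and adjusts its next step, repeating until the goal is reached or a human needs to weigh in.

That loop is the heart of agentic AI.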

Imagine you ask an agent:

“Prepare a short briefing on the latest trends in electric vehicles.”

A chatbot might answer from its general knowledge alone, generating text based on what it learned during training.

An agent, however, could:

- search recent sources,

- compare several articles,

- extract recurring themes,

- summarize the findings,

- organize them into a briefing,

- mention which sources were used,

- ask you whether you want a longer version.

The agent is not just answering. It is moving through a process in a structured way. And that is why tool use is so important. Without tools, the agent is mostly trapped inside a text box. With tools, it can interact with the outside world.
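To make the loop concrete, here is a minimal Python sketch of the briefing example. It is an illustration under simplifying assumptions, not how any particular agent framework works: the two tools (`search_sources`, `summarize`) are hypothetical stubs that return canned text, and `plan_next_step` is a hard-coded stand-in for the language model that would normally choose the next action.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


# Hypothetical stub tools. A real agent would call a search API, read
# documents, and so on; these return canned text so the sketch runs offline.
def search_sources(query: str) -> str:
    return f"3 articles found about {query}"


def summarize(text: str) -> str:
    return f"Summary of: {text}"


TOOLS: dict[str, Callable[[str], str]] = {
    "search": search_sources,
    "summarize": summarize,
}


@dataclass
class AgentState:
    goal: str
    observations: list[str] = field(default_factory=list)
    done: bool = False


def plan_next_step(state: AgentState) -> tuple[str, str] | None:
    """Hypothetical planner: a real agent would ask a language model to pick
    the next tool. This rule-based stand-in searches first, then summarizes."""
    if not state.observations:
        return ("search", state.goal)
    if len(state.observations) == 1:
        return ("summarize", state.observations[-1])
    return None  # nothing left to do


def run_agent(goal: str, max_steps: int = 5) -> AgentState:
    state = AgentState(goal=goal)
    for _ in range(max_steps):  # step budget guards against endless loops
        step = plan_next_step(state)  # 1. plan
        if step is None:
            state.done = True
            break
        tool_name, tool_input = step
        result = TOOLS[tool_name](tool_input)  # 2. act: use a tool
        state.observations.append(result)  # 3. observe, then plan again
    return state
```

Calling `run_agent("electric vehicle trends")` makes two tool calls (search, then summarize) and stops once the planner has nothing left to do. The `max_steps` budget is a deliberate design choice: it is a simple guard against an agent getting stuck in a loop.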

4. What can AI agents actually do in 2026?

The useful question is not “Will agents replace everyone?”

The useful question is:

Which boring, repetitive, multi-step tasks can they help with?

Here are 10 practical examples. This list is only a starting point; the range of possible use cases is much wider.

- Email: Summarize important messages and draft replies

- Meetings: Turn notes into action items and follow-ups

- Research: Read sources and create a short briefing

- Data: Analyze a spreadsheet and explain patterns

- Coding: Read code, suggest fixes, write tests

- Customer support: Triage tickets and escalate urgent cases

- Marketing: Compare competitors and draft campaign ideas

- Travel: Compare flights, hotels, dates, and constraints

- Operations: Monitor recurring issues and prepare reports

- Personal admin: Organize reminders, documents, and tasks

The common pattern is simple:

Agents are useful when a task has steps, tools, context, and a clear result.

They are less useful when the task is vague, risky, emotional, strategic, or impossible to verify.

For example, an agent can help draft a customer reply. But should it send a sensitive legal response without review? Probably not.

An agent can summarize a medical file. But should it make the final diagnosis? No.

An agent can analyze sales trends. But should it decide your company strategy alone? Definitely not.

The best agents are not unsupervised geniuses. They are supervised helpers.

5. What people get wrong about agentic AI

Because the term is everywhere, it is easy to misunderstand it.

Here are four things worth remembering.

a. Agents are not magic

An agent is still software. It depends on the model, the tools, the instructions, the data, and the workflow around it.

Bad data in, bad result out. Bad instructions in, messy behavior out.

b. Agents are not always autonomous

The best systems often include human approval.

For example:

- the agent drafts the email,

- the human approves it,

- then the system sends it.

That is not a weakness. That is good design.
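In code, this pattern amounts to a gate between the draft and the action. The sketch below is a minimal illustration assuming hypothetical `draft_email`, `approve`, and `send` functions; in practice `approve` might be a button in a review screen, and `send` a call to a real email service.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Draft:
    to: str
    body: str


def draft_email(to: str, topic: str) -> Draft:
    # Hypothetical drafting step; a real agent would generate the text with a model.
    return Draft(to=to, body=f"Hello, here is an update on {topic}.")


def send_with_approval(
    draft: Draft,
    approve: Callable[[Draft], bool],
    send: Callable[[Draft], None],
) -> bool:
    """Send the draft only if the human reviewer approves. Returns True if sent."""
    if not approve(draft):
        return False  # rejected: nothing leaves the system
    send(draft)
    return True


# Example: a reviewer who approves, and a list standing in for an outbox.
outbox: list[Draft] = []
draft = draft_email("client@example.com", "the Q3 report")
sent = send_with_approval(draft, approve=lambda d: True, send=outbox.append)
```

Because `approve` and `send` are passed in, the same gate works whether the reviewer clicks a button or answers a prompt, for example `approve=lambda d: input(f"Send to {d.to}? [y/N] ").strip().lower() == "y"`. If approval is refused, nothing is sent.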

c. More agents is not always better

“Multi-agent system” sounds impressive, but five agents talking to each other can also create confusion, cost, and errors.

Sometimes one well-designed agent is better than a team of agents arguing in circles.

d. Agents still fail

They can hallucinate. They can misunderstand the goal. They can call the wrong tool. They can get stuck in loops. They can expose private data if permissions are badly designed.

That is why the golden rule is:

The more freedom an agent has, the more supervision it needs.
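One simple way to apply this rule is a per-tool policy: low-risk actions run automatically, riskier ones wait for a human, and anything outside an allowlist is refused. The sketch below assumes hypothetical tool names and three made-up risk tiers; real systems define their own.

```python
from enum import Enum


class Risk(Enum):
    LOW = 1     # e.g. read-only search
    MEDIUM = 2  # e.g. sending a message on someone's behalf
    HIGH = 3    # e.g. deleting data or moving money


# Hypothetical policy table: which tools this agent may use, and how risky each is.
TOOL_RISK = {
    "search_web": Risk.LOW,
    "read_document": Risk.LOW,
    "send_email": Risk.MEDIUM,
    "delete_records": Risk.HIGH,
}


def decide(tool_name: str) -> str:
    """Return 'run', 'ask_human', or 'deny' for a requested tool call."""
    risk = TOOL_RISK.get(tool_name)
    if risk is None:
        return "deny"  # not on the allowlist: the agent cannot call it
    if risk is Risk.LOW:
        return "run"
    if risk is Risk.MEDIUM:
        return "ask_human"
    return "deny"  # HIGH-risk actions stay with humans in this sketch
```

Here `decide("search_web")` returns `"run"`, `decide("send_email")` returns `"ask_human"`, and an unknown tool name is denied. Keeping the allowlist explicit also limits the damage when the agent asks for the wrong tool.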

6. So, is an AI agent really a coworker?

Yes and no.

It is a coworker in the sense that it can participate in work. It can take a task, follow steps, use tools, and produce something useful.

But it is not a coworker in the human sense. It does not understand your company culture. It does not carry responsibility. It does not know when something is politically sensitive unless the system was designed to handle that.

So the best way to think about it is this:

An AI agent is like a very fast junior assistant with tools, memory, and instructions. Useful, sometimes impressive, but still needing review.

That is not a bad thing. Most useful technology works because we understand its limits.

Spreadsheets are powerful, but we still check formulas, right?

Search engines are useful, but we still evaluate sources (or at least, everyone should!).

AI agents will be the same. Their value will come from how well we design, supervise, and improve the workflows around them.

7. The simple mental model to remember

If you remember only one thing from this article, remember this:

Agentic AI is not about AI becoming human.

It is about AI becoming part of a workflow.

The shift is from asking:

“Can AI answer this?”

To asking:

“Can AI help complete this process?”

That is why agentic AI matters.

Not because every agent is ready to replace a human.

Not because every business needs a multi-agent system.

Not because the hype is always justified.

But because a growing part of digital work can be described as goals, steps, tools, checks, and approvals.

And that is exactly where agents fit.

The future of work is not only about prompting harder. It is about learning how to design better workflows with AI inside them.

That is the real move from chatbots to AI coworkers.

If this helped you finally understand what “agentic AI” means, save it for later. In the next part, we can go one level deeper and look at what is inside an AI agent: tools, memory, planning, guardrails, and human approval.